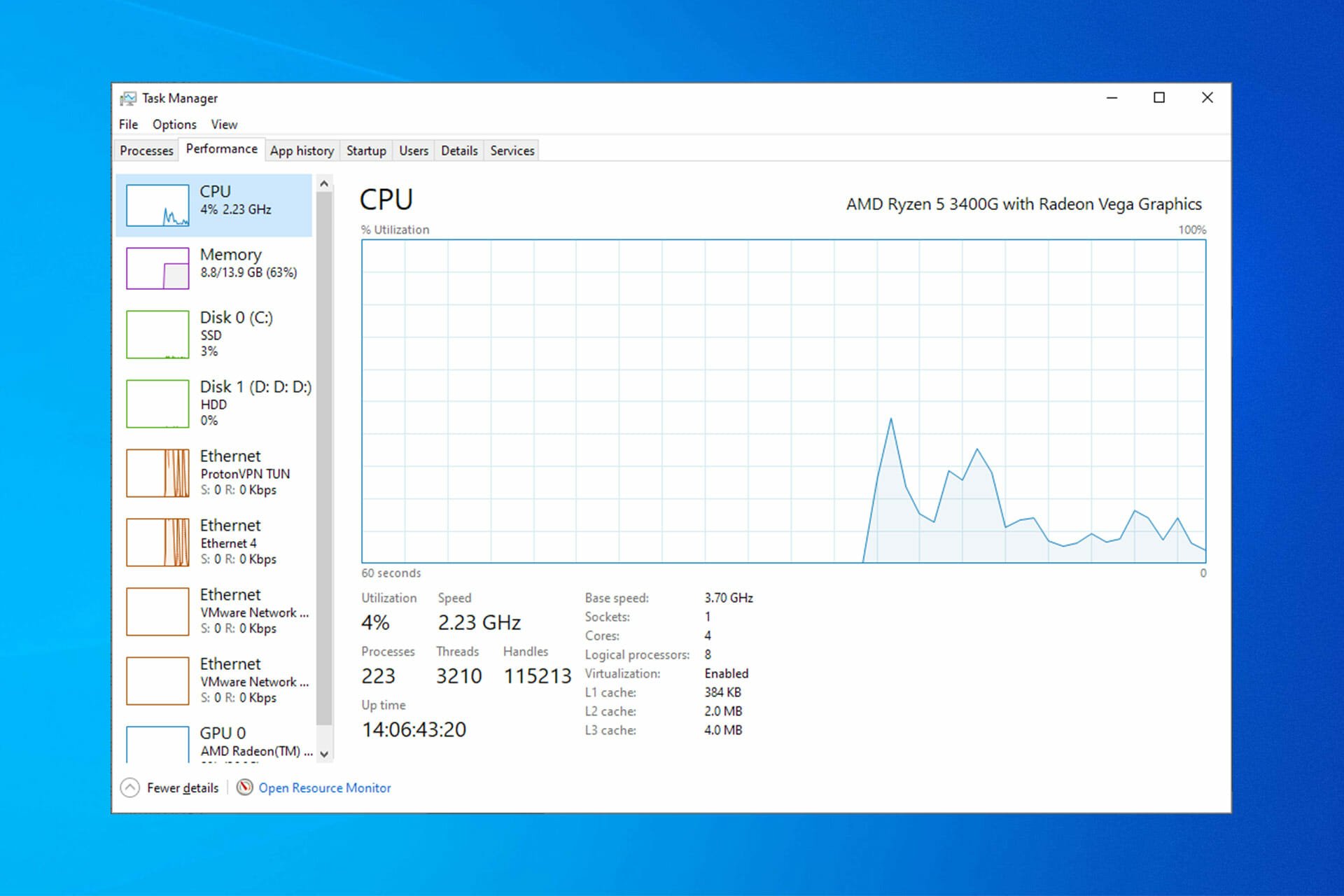

Monitor and Improve GPU Usage for Training Deep Learning Models | by Lukas Biewald | Towards Data Science

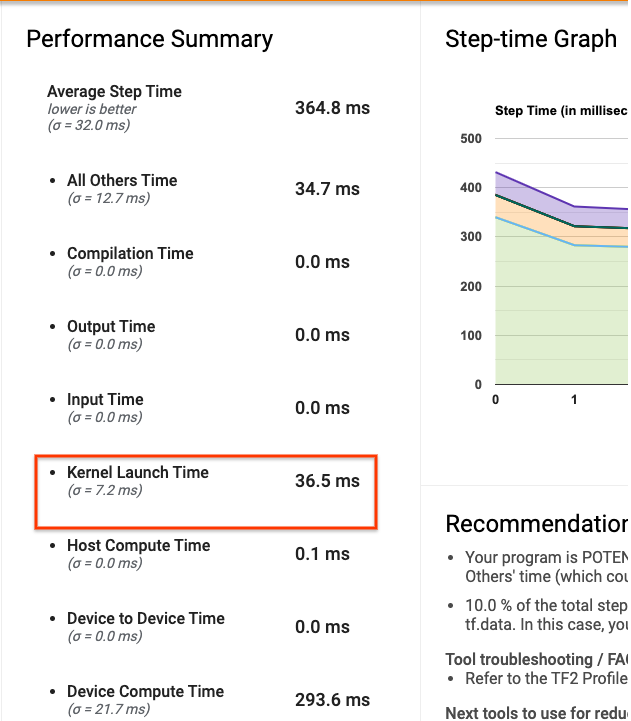

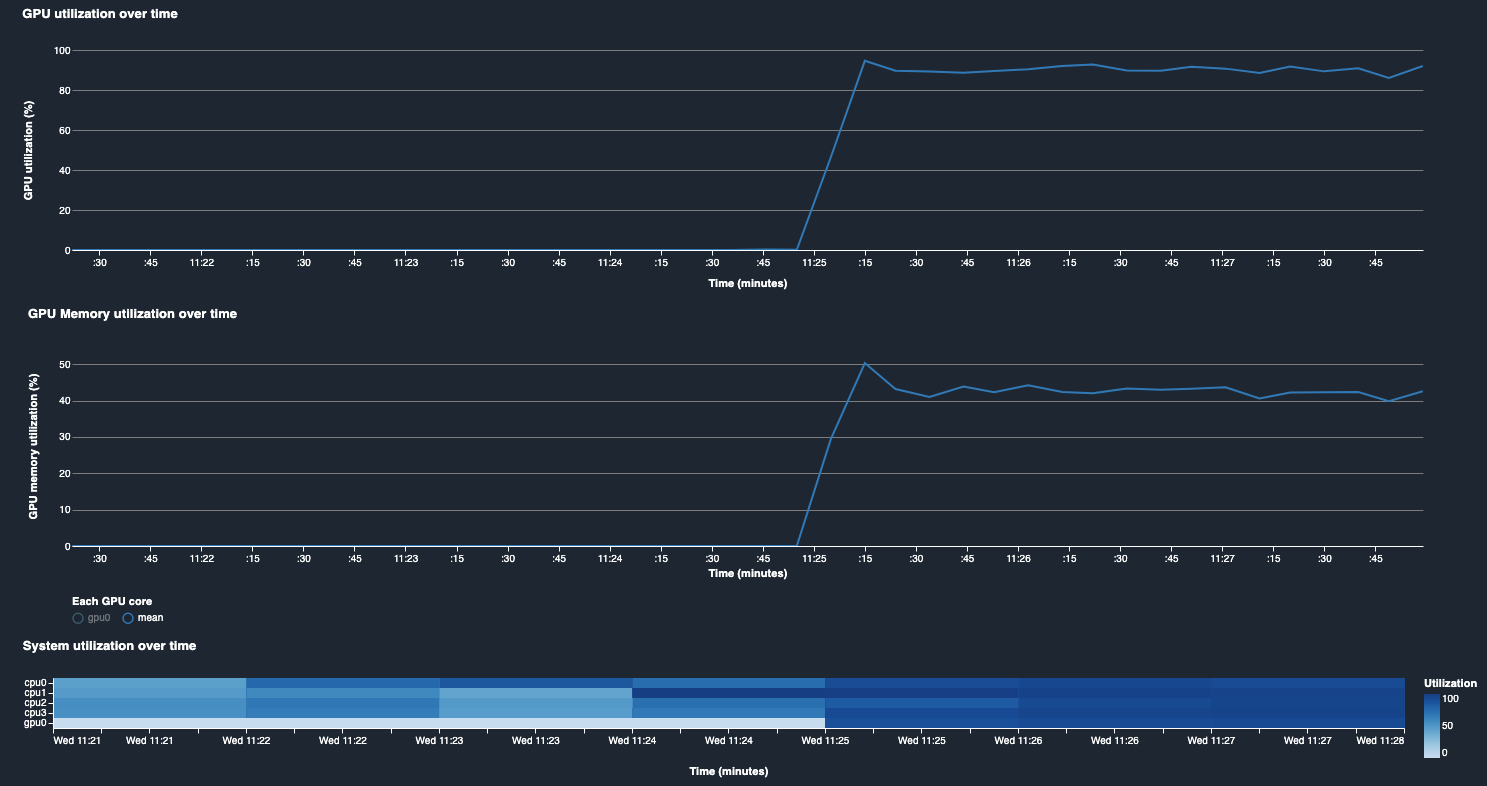

How to identify low GPU utilization due to small batch size — Amazon SageMaker Examples 1.0.0 documentation

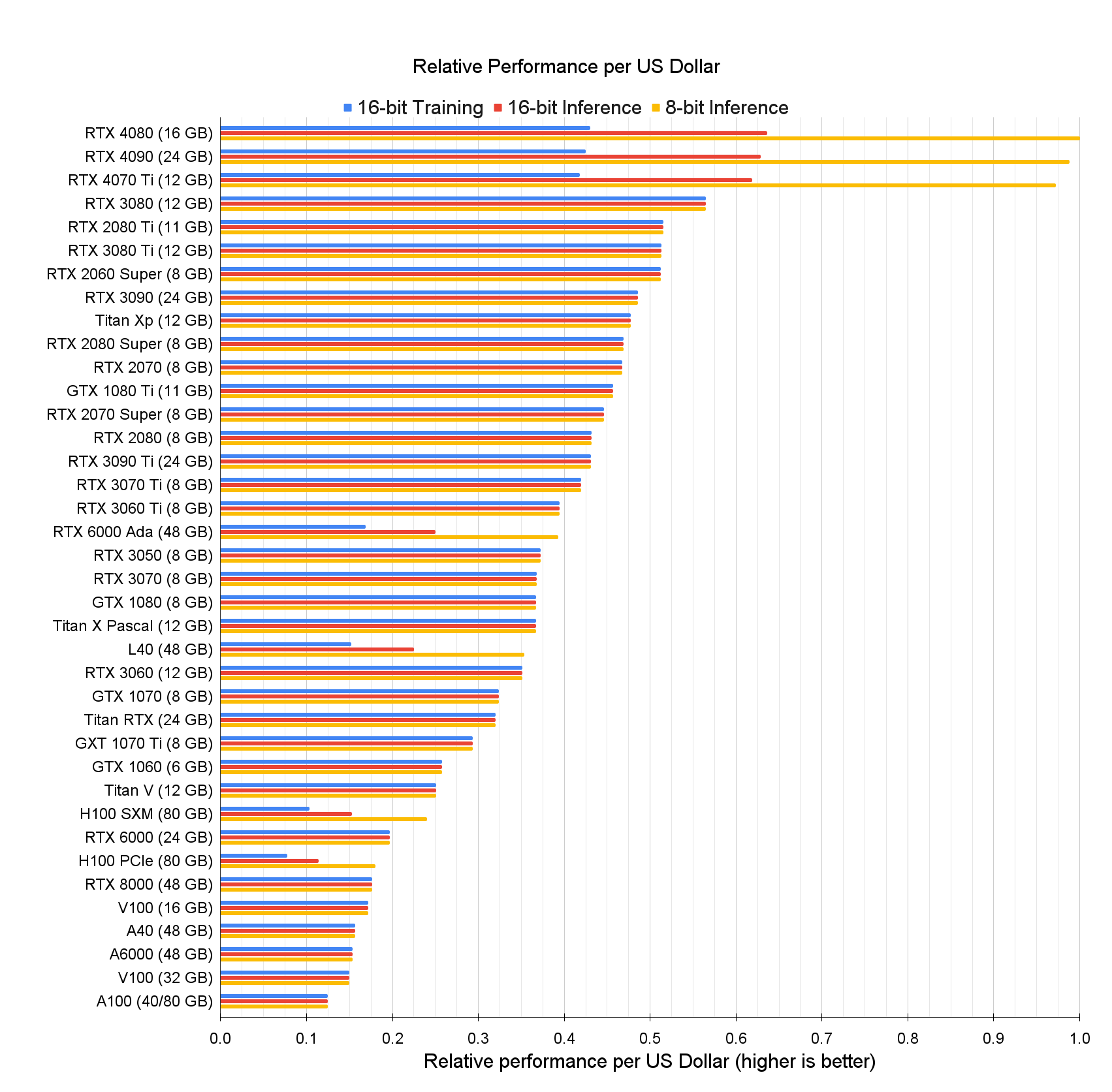

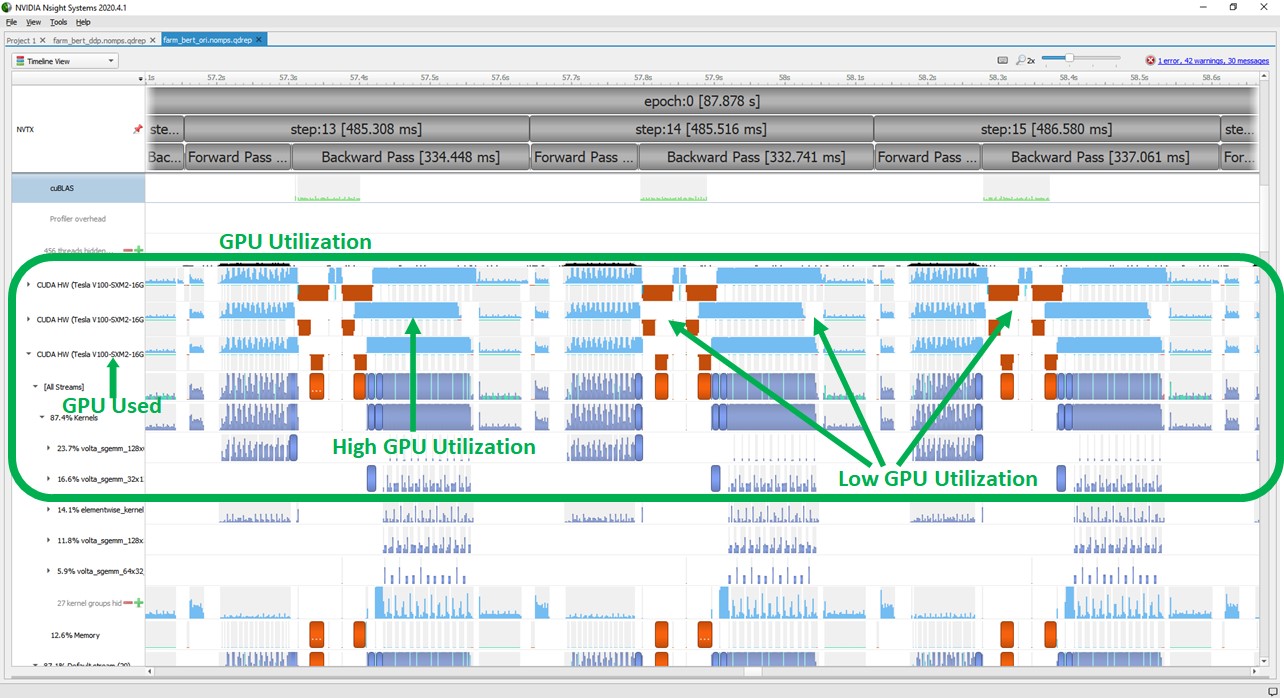

Deepset achieves a 3.9x speedup and 12.8x cost reduction for training NLP models by working with AWS and NVIDIA | AWS Machine Learning Blog