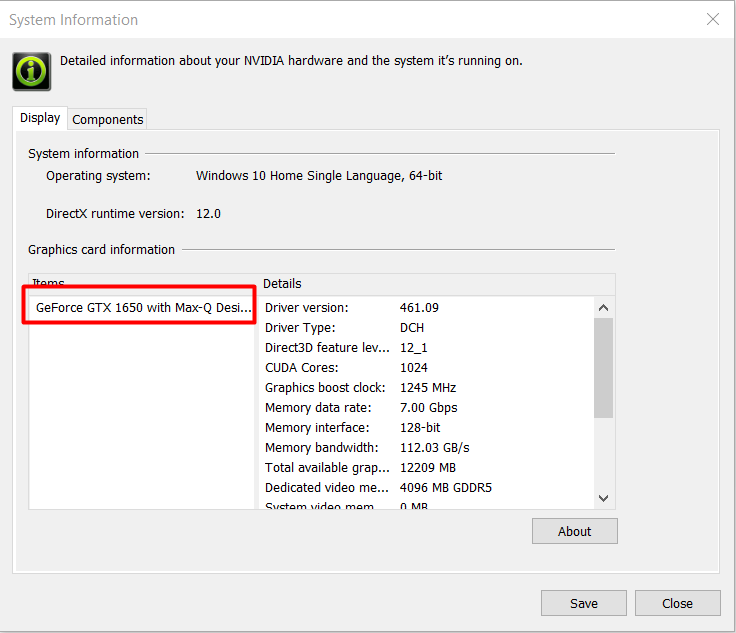

Step-By-Step guide to Setup GPU with TensorFlow on windows laptop. | by MANISHA TAKALE | Analytics Vidhya | Medium

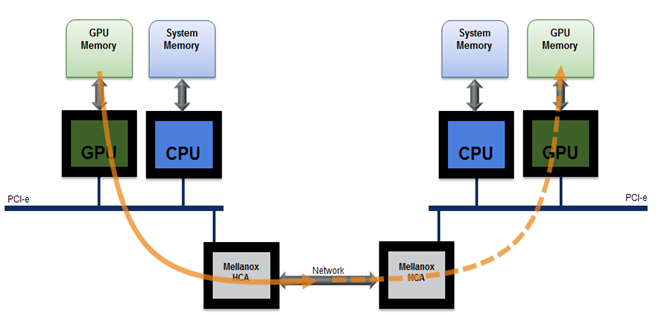

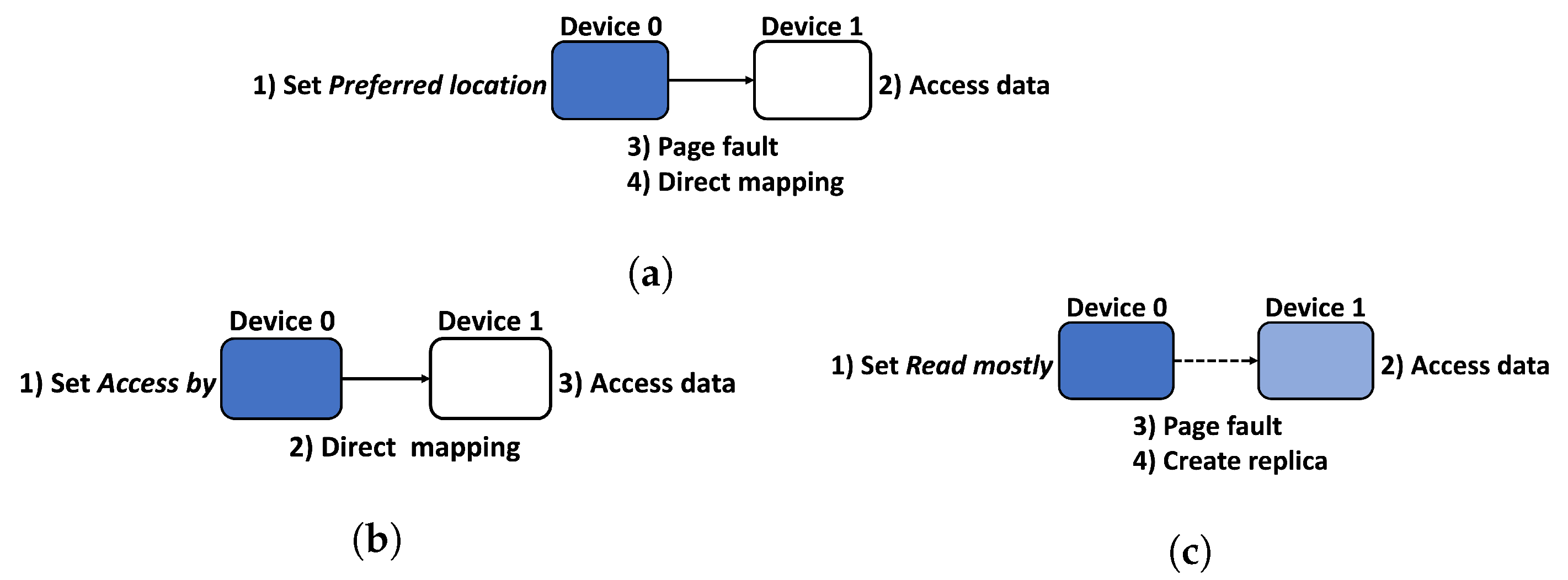

Applied Sciences | Free Full-Text | Efficient Use of GPU Memory for Large-Scale Deep Learning Model Training

Powering the next generation of trustworthy AI in a confidential cloud using NVIDIA GPUs - Microsoft Research

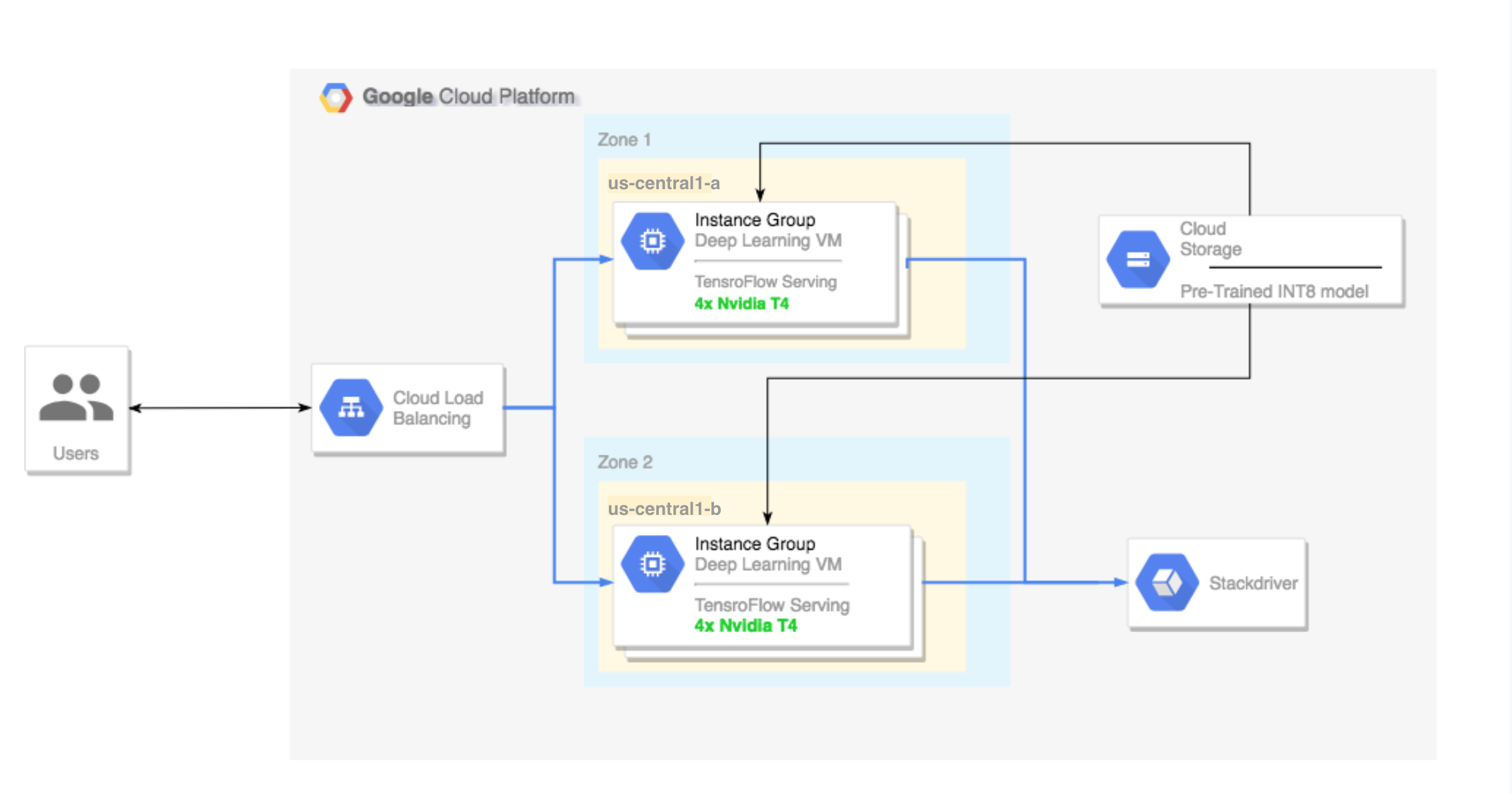

Running TensorFlow inference workloads with TensorRT5 and NVIDIA T4 GPU | Compute Engine Documentation | Google Cloud

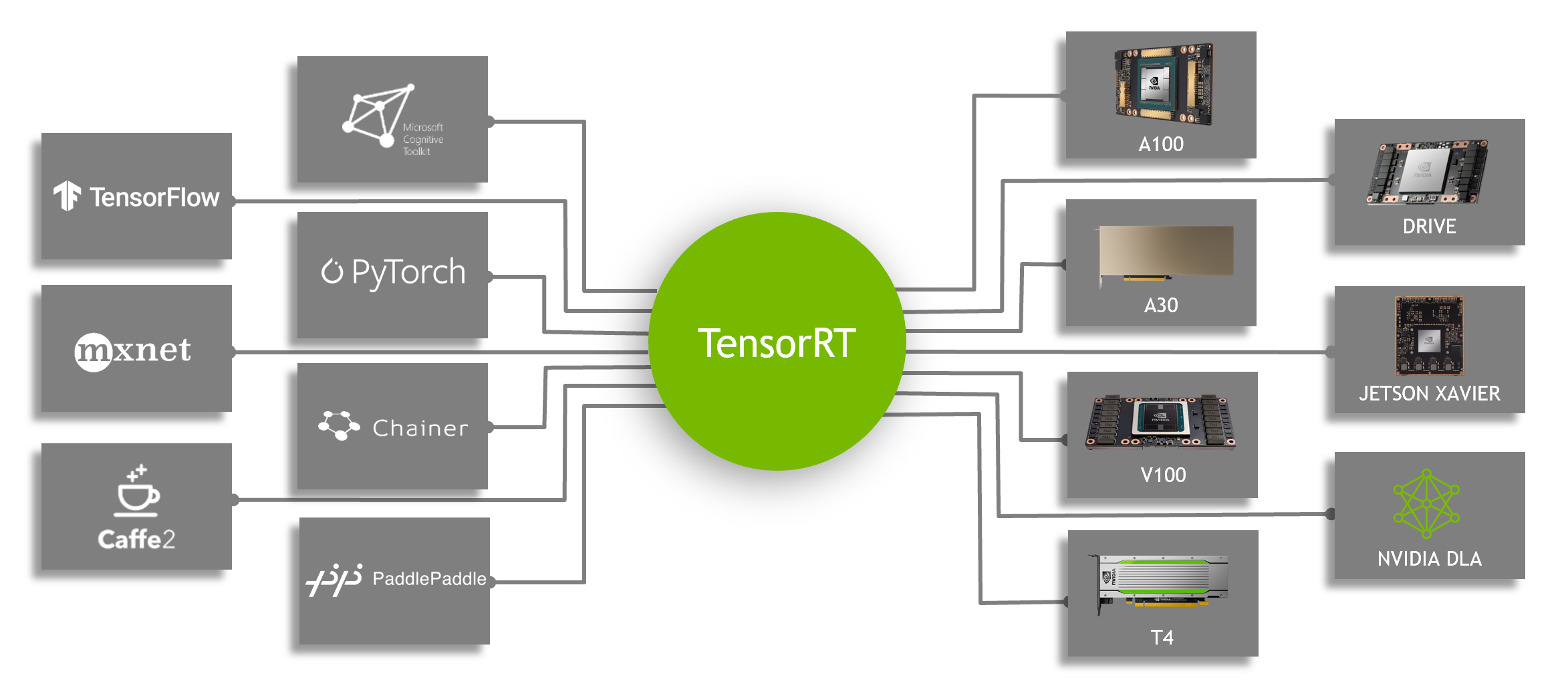

Speeding Up Deep Learning Inference Using TensorFlow, ONNX, and NVIDIA TensorRT | NVIDIA Technical Blog

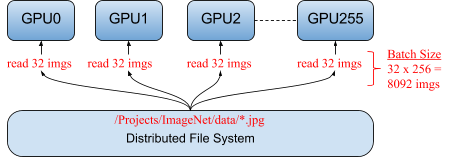

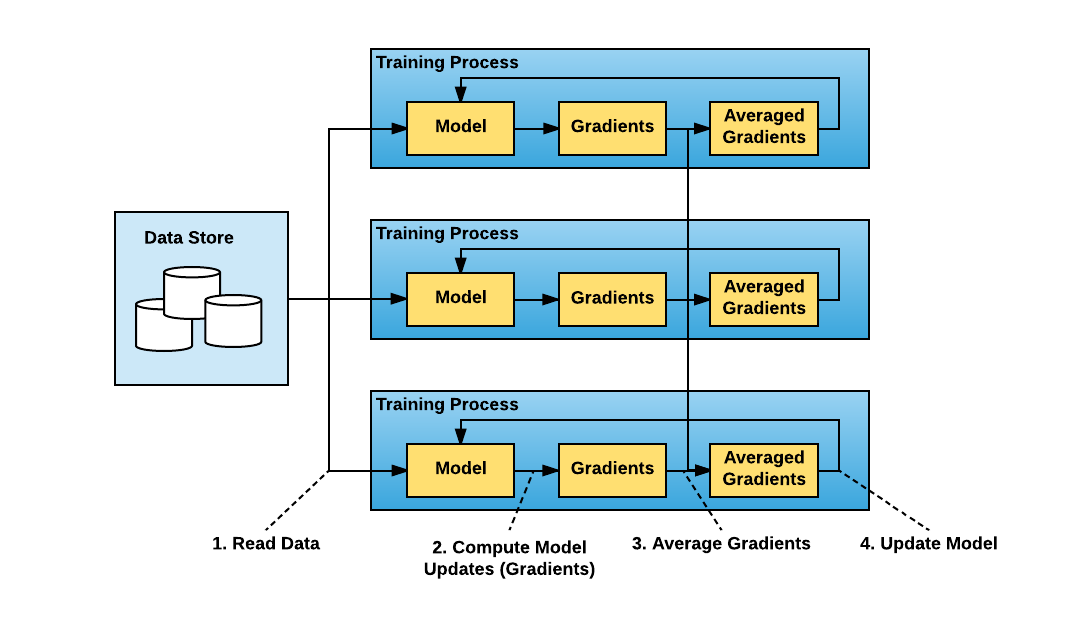

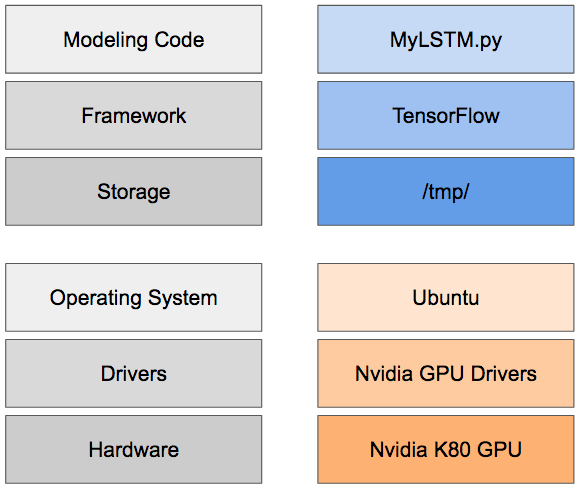

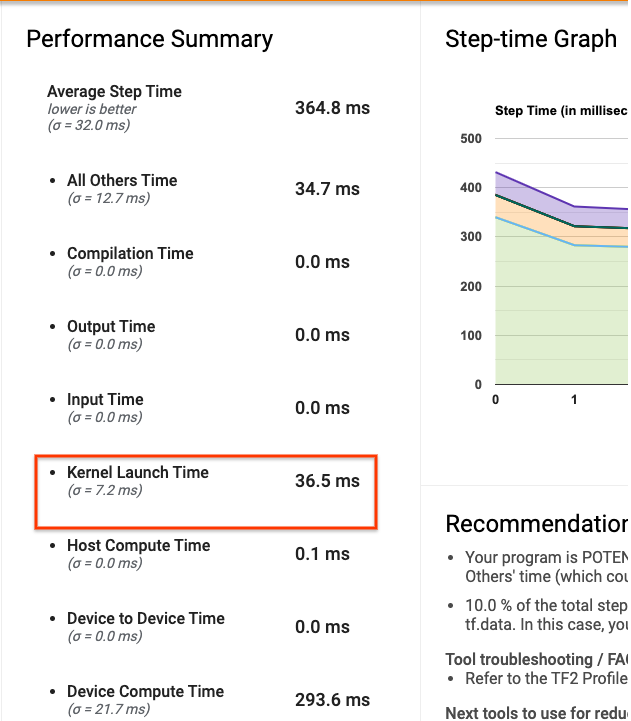

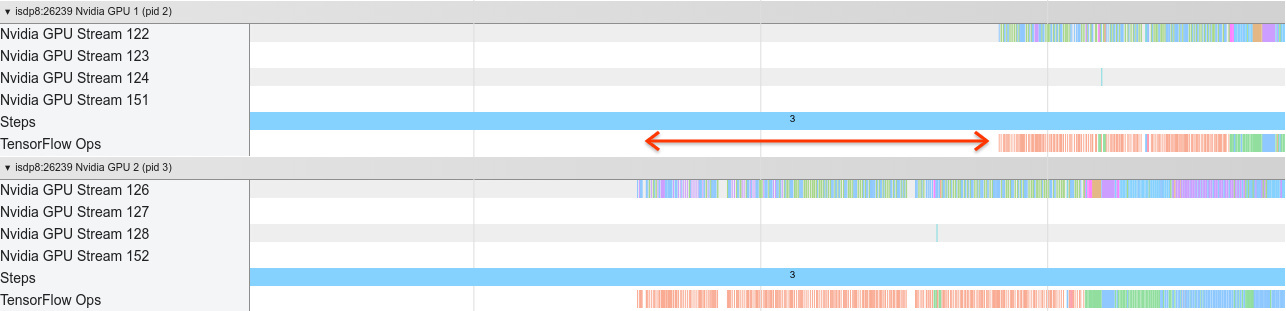

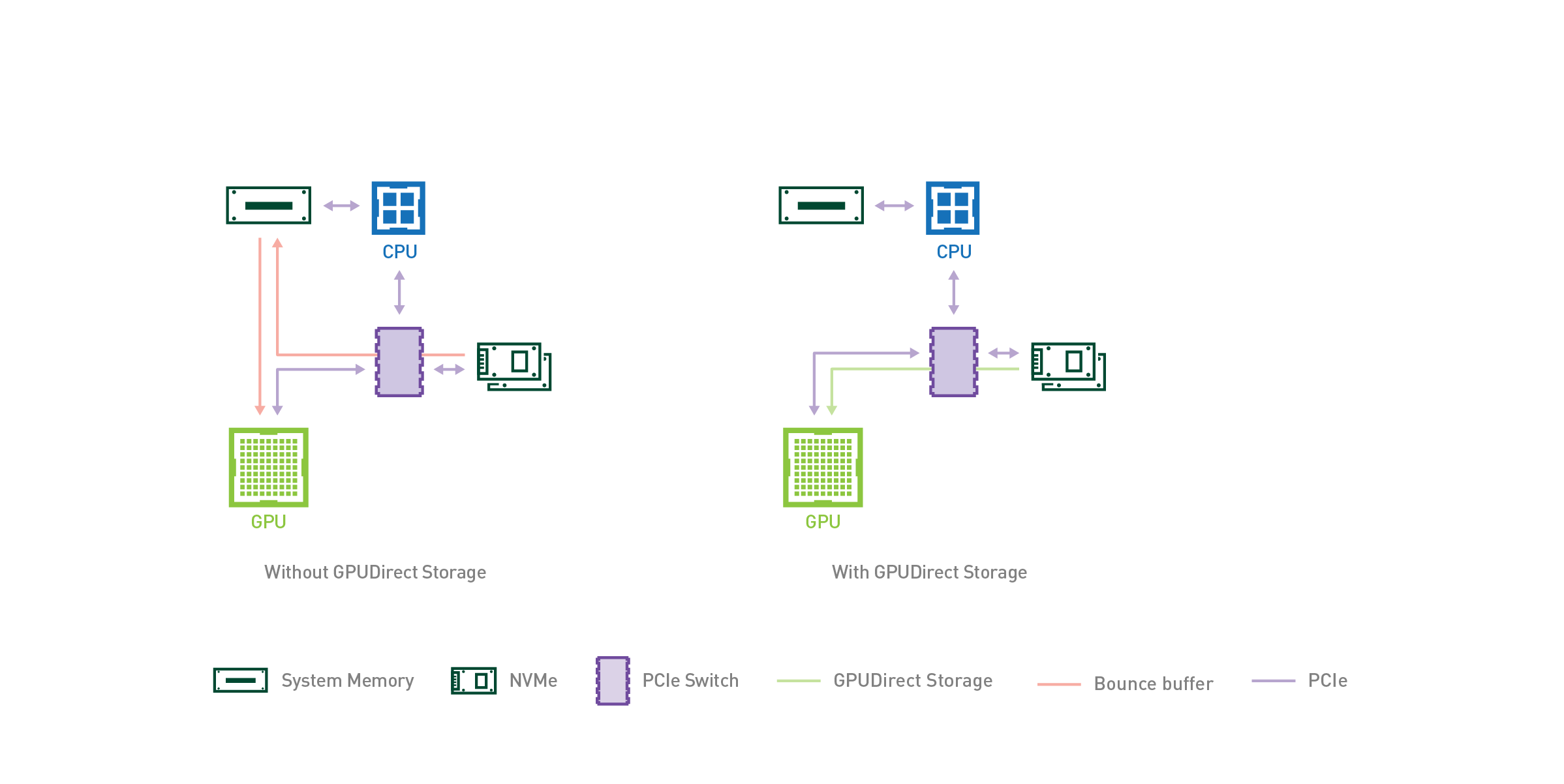

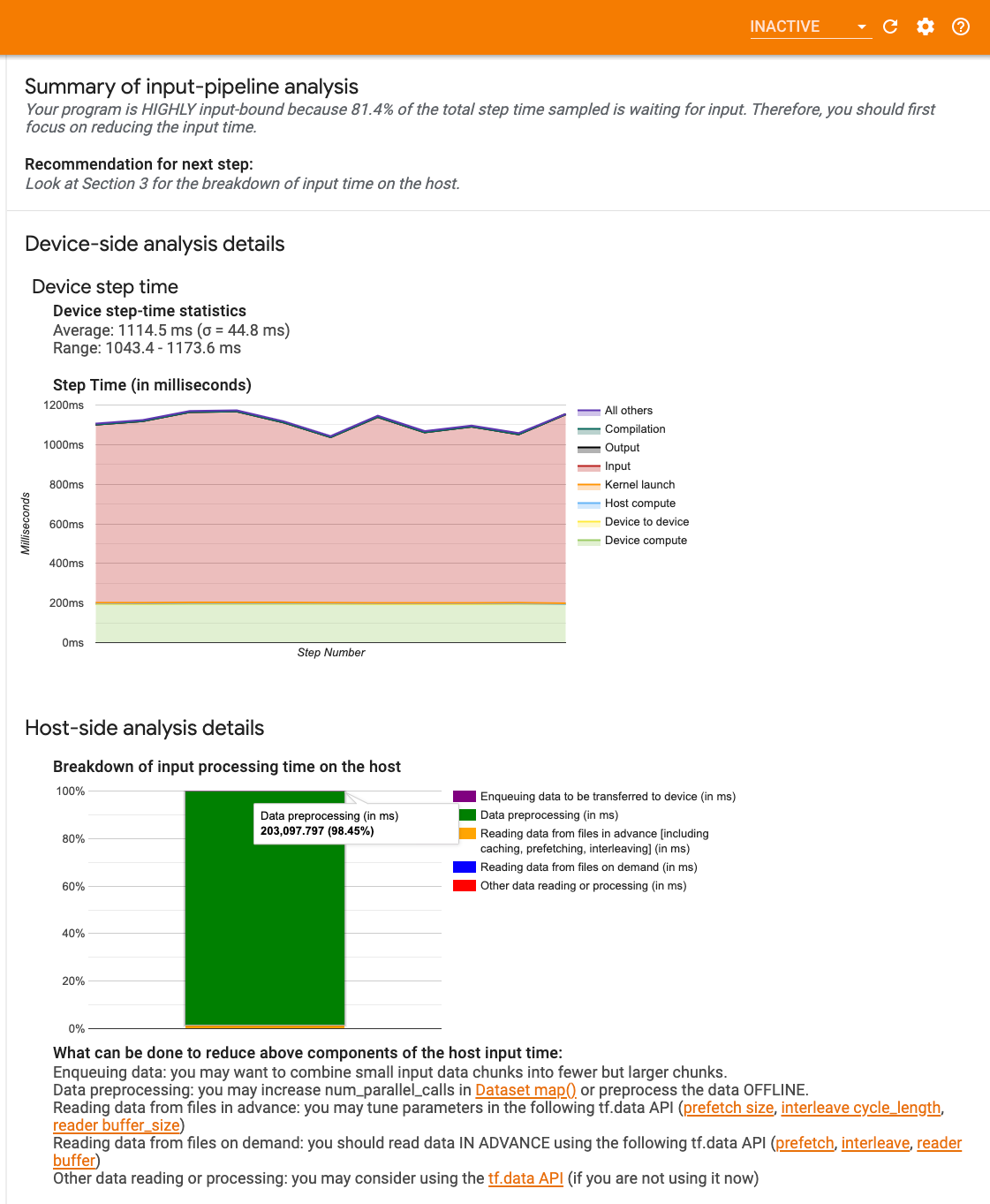

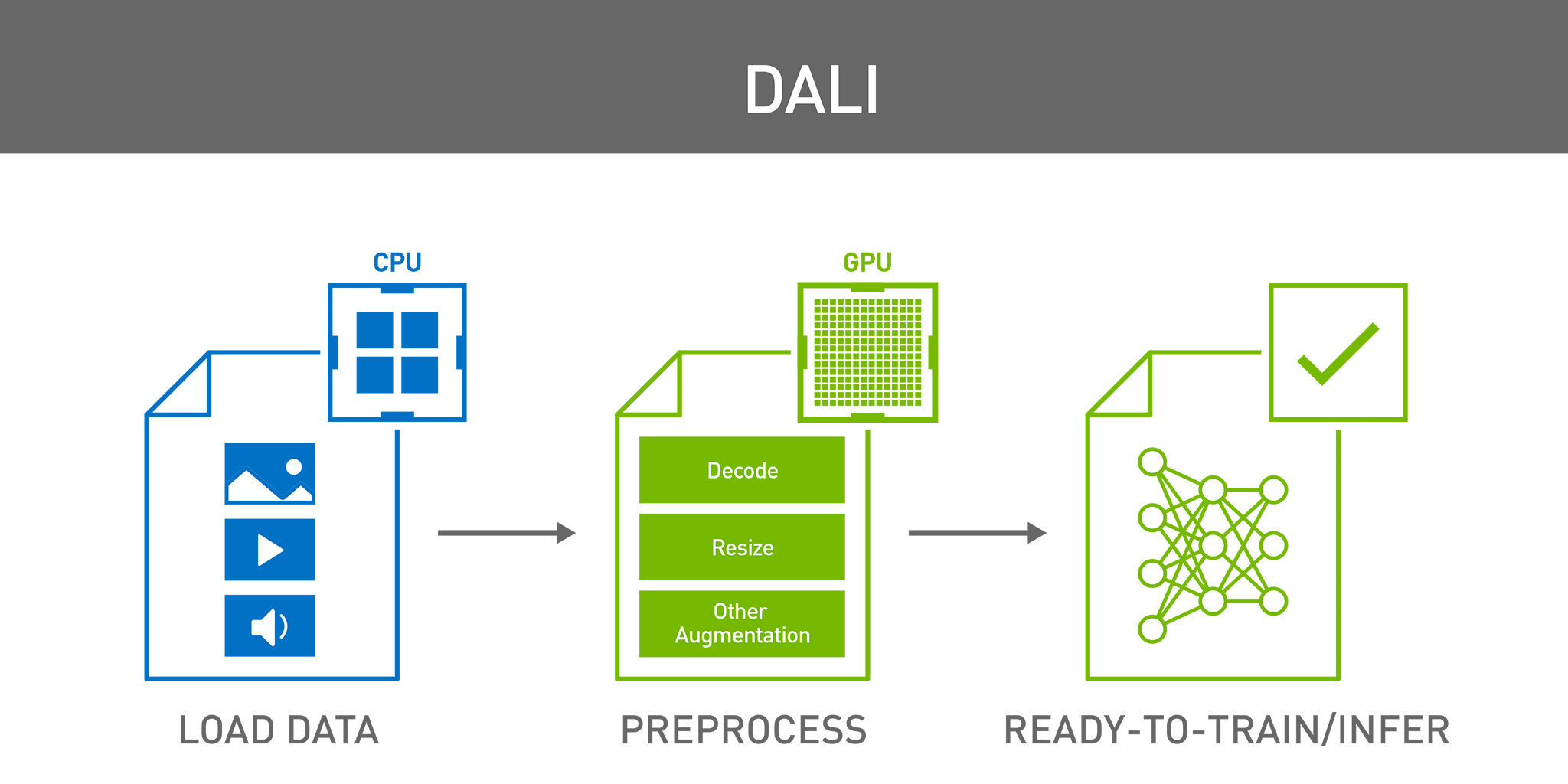

Optimizing I/O for GPU performance tuning of deep learning training in Amazon SageMaker | AWS Machine Learning Blog